When AI produces PhD-level mathematics in an hour

WORDPRESS.COM

We’re all acutely aware of the impact AI is having on our field of expertise, that of software engineering, and are experiencing a mixture of amazement and unease as a result. However, AI is having a growing impact on other fields, especially those with similar characteristics to ours (e.g. machine-provable correctness).

This blog post, by a member of the Department of Pure Mathematics and Mathematical Statistics at Cambridge University, describes his recent experience with GPT 5.5 Pro:

“ChatGPT 5.5 Pro […] produced a piece of PhD-level research in an hour or so, with no serious mathematical input from me.”

Recent reports have been shared of LLMs solving a number of previously unsolved Erdős problems - tricky mathematical puzzles posed by the famous mathematician Paul Erdős, often easy to state but very hard to solve. But it’s really interesting to read the first-hand experience of a practicing mathematician.

The main body of this post goes way over my head - in brief, he used GPT 5.5 Pro to make material progress on a number theory problem (via a 17-minute and a 23-minute session), and the result was validated by a peer.

“I would judge the level of the result that ChatGPT found in under two hours to be that of a perfectly reasonable chapter in a combinatorics PhD.”

The final section of this post is the most interesting, where his colleague ponders the long-term impact on students. The lower bound for contribution has risen, given that AI can solve the “gentle problems”. He ponders whether this generalises to other fields of mathematics, but also reflects that the capability of AI is increasing so rapidly that the answer to that question may change imminently. Finally, he answers the question “does studying mathematics still make sense?”

“By doing research in mathematics, you may not get the same rewards as your equivalents a generation ago, but there is a good chance that you will be equipping yourself very well for the world we are about to experience.”

There are clear parallels between each of his points and the experiences in our field.

You Need AI That Reduces Maintenance Costs

JAMESSHORE.COM

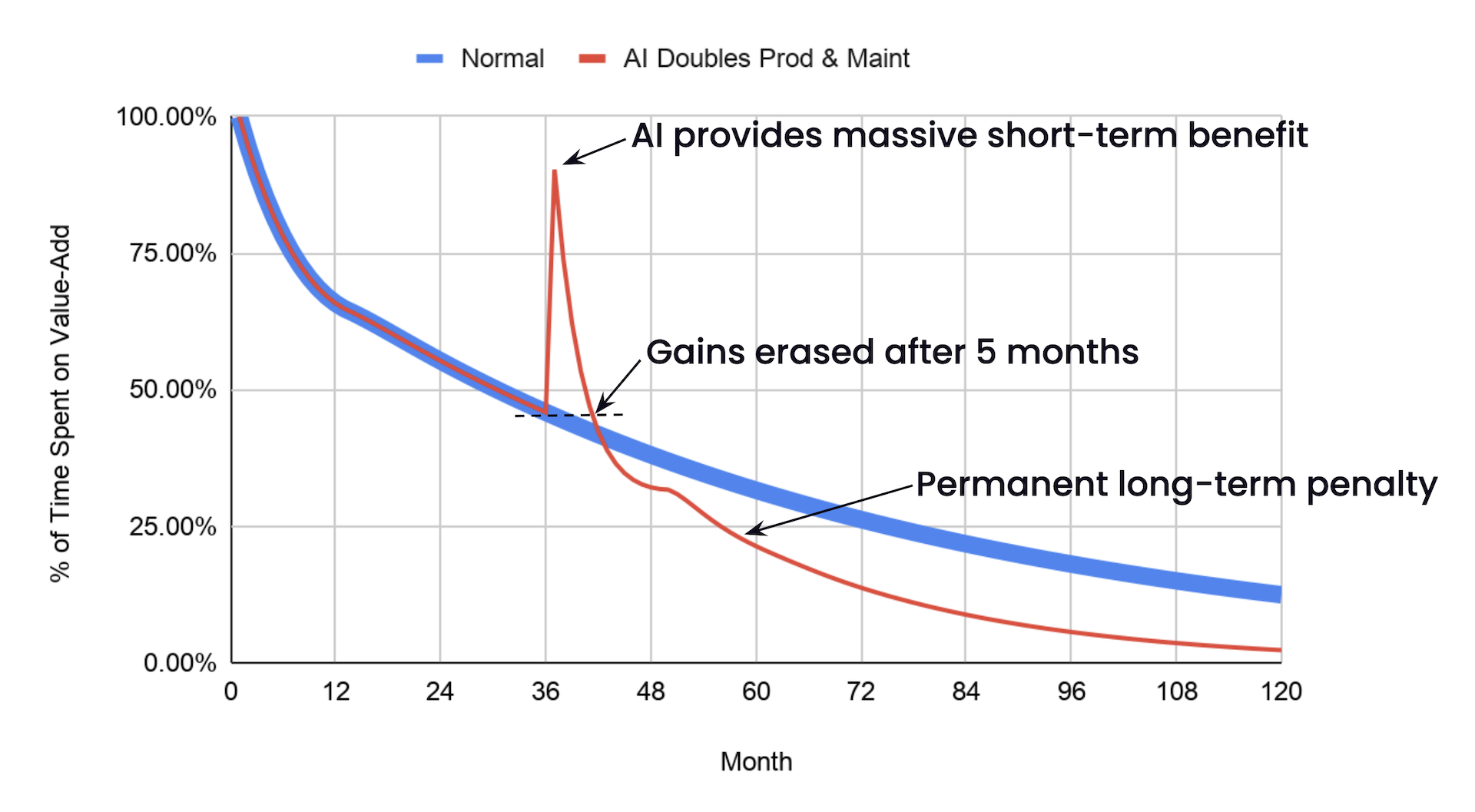

This blog post makes a simple, yet important, point very clearly. With every line of software you write there is an associated future maintenance cost. While this is very small at first, in the glorious early days of a project, it grows over time due to a compounding effect.

Eventually you end up spending more time on maintenance than features. Of course there are things you can do to mitigate this, fixing “tech debt” or migrating away from now-legacy technologies. However, in practice, this debt pay-off doesn’t happen all that often.

With AI, you can rapidly accelerate the amount of code being delivered. If we assume the quality drops, with a doubling of maintenance burden (which might sound pessimistic, but if you’re vibe-coding this is probably optimistic), the gains from acceleration are lost in just 5 months.

This is a very useful cautionary tale, and is of course avoidable if you pay attention to quality. As the author states:

“You have to invert your productivity. If you’re producing twice as much code, you need code that costs half as much to maintain. Three times as much code, one third the maintenance.”

Great advice.
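
The compounding effect can be sketched with a toy model. This is not the author's model: the capacity of 1.0 unit per month, the `maintenance_rate` values and the function names are all assumptions chosen for illustration, so the numbers it produces should not be read as reproducing the figures in the post. It only shows the shape of the argument: maintenance eats into feature time in proportion to the code already written, so a team that doubles its speed while doubling its per-unit maintenance cost eventually ships less per month than the unaccelerated team, whereas halving the maintenance cost preserves the gain.

```python
def monthly_feature_output(speed, maintenance_rate, months):
    """Feature output per month for a team with 1.0 unit of capacity.

    speed            -- code produced per unit of feature time (hypothetical)
    maintenance_rate -- monthly upkeep owed per unit of existing code (hypothetical)
    """
    code = 0.0
    outputs = []
    for _ in range(months):
        # Upkeep is paid first, in proportion to all code written so far.
        maintenance = maintenance_rate * code
        feature_time = max(0.0, 1.0 - maintenance)
        produced = feature_time * speed
        outputs.append(produced)
        code += produced
    return outputs


def first_month_behind(baseline, accelerated):
    """First month (1-indexed) where the accelerated team ships less, else None."""
    for month, (b, a) in enumerate(zip(baseline, accelerated), start=1):
        if a < b:
            return month
    return None


if __name__ == "__main__":
    months = 60
    baseline = monthly_feature_output(speed=1.0, maintenance_rate=0.05, months=months)
    # Twice the code, twice the upkeep per unit of code.
    sloppy = monthly_feature_output(speed=2.0, maintenance_rate=0.10, months=months)
    # Twice the code, half the upkeep per unit of code ("inverted" productivity).
    careful = monthly_feature_output(speed=2.0, maintenance_rate=0.025, months=months)

    print("sloppy falls behind in month:", first_month_behind(baseline, sloppy))
    print("careful falls behind in month:", first_month_behind(baseline, careful))
```

In this sketch the rate at which feature time erodes depends on the product of `speed` and `maintenance_rate`, which is why halving the maintenance cost exactly offsets doubling the output.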

Mythos finds a curl vulnerability

HAXX.SE

Last month Anthropic’s (non) release of Mythos caused a media storm and even helped Anthropic rebuild its relationship with the White House. The argument for not making this a public release was the unparalleled ability of this model to uncover security flaws in software, something that could be exploited by bad actors.

Daniel Stenberg is the lead maintainer of curl, a tool that has billions of users and is considered open source “critical infrastructure”. Through the Linux Foundation’s “Project Glasswing” his codebase was scanned by Mythos, and the results were somewhat underwhelming: just one minor security vulnerability was discovered, whereas previous security scans had found a significantly higher number (which are now addressed).

I’m not going to linger on the Mythos story here. Yes, it was hyped, and that is no great surprise. What is much more interesting is how effective AI-powered security scanning and code review tools are. Daniel mentions that:

“These tools and the analyses they have done have triggered somewhere between two and three hundred bugfixes merged in curl through-out the recent 8-10 months or so”

That’s an amazing result, and highlights an incredibly valuable use case for LLMs. If you’re not using AI tools for security scanning your codebase, you should be!

The bun re-write in Rust has merged

GITHUB.COM

Bun is a popular JavaScript / TypeScript bundler, runtime and package manager that was originally written in Zig - a modern systems programming language and alternative to C. At the start of this year Anthropic acquired Bun, in order to start building a more complete developer ecosystem, and hire some great talent.

A couple of weeks ago an experimental re-write of Bun in Rust appeared, with a 99% test pass rate. This caused a lot of lively debate on Hacker News, with the Bun maintainer stepping in to say:

“This whole thread is an overreaction. 302 comments about code that does not work. We haven’t committed to rewriting. There’s a very high chance all this code gets thrown out completely.”

But just two weeks later, the work was complete and Bun is now built on Rust, not Zig. A very popular (90k star) open source project, with millions of users, flipping language in just a couple of weeks is astonishing. An amazing technical feat, but why?

This may be due to the Zig project having a very strong anti-LLM policy. The Bun team were already operating their own fork of Zig in order to optimise performance for their project, but had no plans to upstream this change due to the anti-LLM policy. With Bun having been acquired by Anthropic, this was not a good look.

What might this mean for your own migration projects? The Bun example is something of a perfect case: a comprehensive test suite, and also a lot of human time and effort invested to shape the product. In that sense it is an upper bound on what to expect. However, there is a lot that can be learnt from this overall approach when using AI in less ideal environments, for example a more ‘messy’ migration where tests are lacking, or where functionality is added or changed. It is still possible to create an environment (through harness engineering) where AI can tackle sizeable tasks - at speed.

I Built a Tool to Figure Out What Was Waking Me Up at Night

MARTIN.SH

One of the great things about AI is just how easy it is to throw together a “personal” application. I’ve already written a few, including an app for logging karting sessions, an app which delivers an improved interface for Salesforce, a tool that automatically creates photo albums (using a variety of image processing techniques) and many more. It is a great fit for when you care more about solving a problem than how that problem is solved.

This blog post shares a great example - a tool that helped the author work out what was waking them up at night:

“AI is what made it realistic for me to build this in a weekend”

Both fun and useful.