The West forgot how to make things, now it’s forgetting how to code

TECHTRANCHES.DEV

This is an interesting blog post that warns of the risk we are running around collective loss of knowledge, and just how long it takes to rebuild.

It starts with a few military examples, how long it took to restart Stinger missile production to support Ukraine, the challenge of re-learning how to produce Fogbank for nuclear warheads. All are examples of how hard it is to regain knowledge once it is lost. The fact that Artemis wasn’t a trivial exercise is probably another good example.

What does this have to do with code?

“I read the Fogbank story and recognized it immediately. Not the nuclear material. The pattern. Build capability over decades. Find a cheaper substitute. Let the human pipeline atrophy. Enjoy the savings. Then watch it all collapse when a crisis demands what you optimized away.”

This might sound rather extreme, however, we are becoming increasingly disconnected from the line-by-line detail of the code. And if, all of a sudden, we find ourselves in a situation where this detail knowledge is needed, we may struggle.

This post quite rightly points out that this is a greater challenge for junior engineers who may not gain this knowledge in the first place.

“It’s Fogbank for code. When juniors skip debugging and skip the formative mistakes, they don’t build the tacit expertise. And when my generation of engineers retires, that knowledge doesn’t transfer to the AI.”

There is much I agree with in this blog post. However, I am sure the knowledge will still exist, but in shorter supply. This will lead to localised failures, rather than industry-wide collapse.

A.I. Should Elevate Your Thinking, Not Replace It

KOSHYJOHN.COM

This next post is a useful antidote to the one above …

Koshy has observed two types of AI user, one who uses it as a tool to remove drudgery and the other who uses it as a tool to avoid thinking. I certainly aim to use AI for the former, but in all honesty, it can sometimes be a little hard to determine when you accidentally fall into the trap of letting AI ‘think’ for you.

This post points out that the value of a good software engineering was never about their ability to churn out code:

“For years, people have confused software engineering with code production. That confusion is now getting exposed.”

Their value was always in judgement. The ability to make sound decisions, identify missing abstractions, make trade-offs and ‘debug reality’. In our field the number of possible directions you can take are near infinite. Good judgement guides software towards a successful goal.

He does also highlight the challenge of learning good judgement as a junior engineer:

“Those skills are built through friction. Through struggle. Through getting things wrong and fixing them. Through tracing failures back to root cause. Through writing something and realizing it does not survive contact with reality.”

The problem is, experiencing friction and struggle takes time. How do we compress what too us 5-10 years into the much shorter timescale needed for agentic engineers of the future?

Who Owns the Code Claude Wrote?

SUBSTACK.COM

Considered the very widespread adoption of coding agents, it is quite surprising how many legal gray areas surround them. The very foundation of these tools is the large language model (LLM) whose very existence depends on massive volumes of training data. There is an ongoing dispute around whether their use of copyright data (including code from GitHub) is legal and considered fair use. However, this blog post touches on a completely different, and somewhat surprising legal issue.

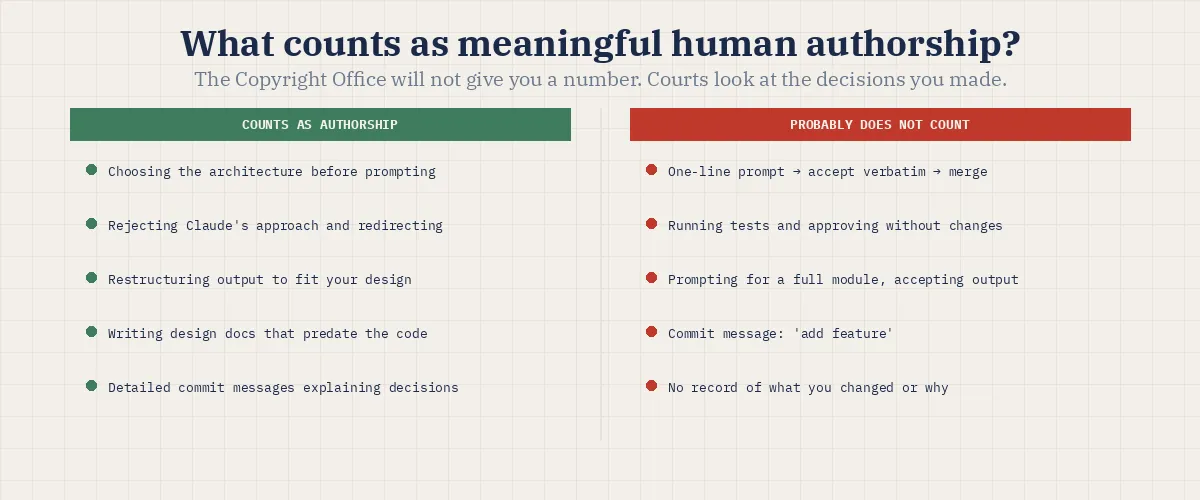

Most organisations expect to be able to protect the code they write, by means of copyright laws. However, copyright only protects work created by humans. There is an ongoing case which explores what this means for creative arts, when AI is used to generate an artwork, can the human operator claim copyright? But what about the code generated by Claude Code, Cursor or Copilot? Can that be protected by copyright?

Based on the current direction the legal system is moving in, it looks like the answer might be “no”.

“Code that Claude Code or Cursor generated and you accepted without meaningful modification may not be copyrightable by anyone. If a competitor copies it, you may have no legal recourse, because the code sits in the public domain in everything but name.”

Although “meaningful human authorship” is clearly quite vague.

This feels like another potential threat to open source, which I wrote about a few weeks ago. If you cannot copyright code, you cannot release it with an open source licence.

Interesting times!

Finetuning Activates Verbatim Recall of Copyrighted Books in Large Language Models

ARXIV.ORG

And on a very-much related note, this recent research paper demonstrates that LLMs can recall significant passages from copyright texts, with single verbatim spans exceeding 460 words. Currently LLMs have various output filters that try to block this verbatim regurgitation, but as with most things in this field, these safe-guards are far from robust.

My local agentic dev setup today

WILLEMVANDENENDE.COM

It feels like the era of subsidised inference is starting to come to an end, just this week GitHub announced that Copilot is moving to usage-based billing. As a result, there is a growing interest in local models, which allow you to decouple yourself from any future changes to billing.

This blog post provides a detailed rundown of a local agentic setup. Interestingly, this isn’t just a local model behind GitHub Copilot, instead they are using Pi, and the terminal - there isn’t an IDE in sight.

I must admit, I am very-much attached to my IDE. I tend to prefer a visual way of working, and my memory for keyboard shortcuts is weak! However, as an avid fan of Claude Code (in the terminal), I am wondering whether I should re-think and give this a go.