Ladybird adopts Rust, with help from AI

LADYBIRD.ORG

Ladybird is a new web browser that is currently under active development. The team behind it have been considering alternatives to C++ for a while, and having evaluated Rust and Swift in the past, they decided to stick with C++. However, Rust has gradually become a more compelling option. So what to do with their current JavaScript engine (25,000 lines of code), written in C++?

Erm, that’s easy. Get Claude Code / Codex to port it.

Armed with a suite of 52,898 tests, this human-directed migration took just one week.

This is a near-perfect example of an agentic loop, where an AI agent is given a mechanism for validating its output and allowed to iterate. We’re going to see a lot more of this.
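To make the shape of that loop concrete, here is a minimal sketch in Python. It is not taken from the Ladybird post: `agentic_loop`, `ToyAgent` and `toy_validate` are hypothetical names, and the "agent" is a toy stand-in rather than a real model. The only point is the structure: propose, validate, feed the failures back, repeat.

```python
from typing import Callable, Optional

# Hypothetical types for illustration: a candidate is some text, and feedback
# is a list of failure messages from the validator (e.g. failing tests).
Candidate = str
Feedback = list[str]


def agentic_loop(
    propose: Callable[[Feedback], Candidate],
    validate: Callable[[Candidate], Feedback],
    max_iterations: int = 10,
) -> Optional[Candidate]:
    """Ask for a candidate, validate it, and feed failures back until it passes."""
    feedback: Feedback = []
    for _ in range(max_iterations):
        candidate = propose(feedback)
        feedback = validate(candidate)
        if not feedback:  # no failures reported: accept this candidate
            return candidate
    return None  # iteration budget exhausted without a passing candidate


def toy_validate(candidate: Candidate) -> Feedback:
    """Stand-in for a test suite: reports which required pieces are missing."""
    required = ["parse", "compile", "run"]
    return [f"missing: {name}" for name in required if name not in candidate]


class ToyAgent:
    """Stand-in for an AI agent: addresses one reported failure per round."""

    def __init__(self) -> None:
        self.parts: list[str] = []

    def propose(self, feedback: Feedback) -> Candidate:
        if feedback:
            self.parts.append(feedback[0].removeprefix("missing: "))
        return " ".join(self.parts)


if __name__ == "__main__":
    agent = ToyAgent()
    print(agentic_loop(agent.propose, toy_validate))
```

In a real migration, `validate` would run the test suite and `propose` would call the coding agent; the iteration cap is there so that a loop that never converges still stops.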

How we rebuilt Next.js with AI in one week

CLOUDFLARE.COM

Oh … and here’s another one.

Next.js is a very popular React framework. It is more than just a front-end library, with server-side rendering, routing, static site generation and more. Unfortunately, it isn’t just a collection of static files: it requires a specific type of Node-based runtime environment, which makes it tricky to host in a serverless framework.

Cloudflare’s solution to the problem was to build vinext, a framework with the same API as Next.js, but with an improved deployment model. And by using AI, and the thousands of end-to-end tests for Next.js, an engineer was able to make significant progress in just days.

I must admit, I’m a bit conflicted here. Again, it is an amazing example of what can be done with AI, and especially an agentic loop. But I do wonder how the Next.js team feel about this? If I had a popular open source project, and someone just cloned it (changing the language, or architecture) with AI, I’d probably be quite upset. Years of crafting APIs, documentation, tests and fostering a community, cloned in weeks? And the better your engineering practices are, the better your documentation and test suite, the easier it is for you to be cloned.

Tests Are The New Moat

SAEWITZ.COM

You can probably guess what this post is about from just the title. Reflecting on the Cloudflare post above, Daniel notes the uncomfortable conflict that has very rapidly emerged:

“It used to be that good documentation, strong contracts, well designed interfaces, and a comprehensive test suite meant users could trust your platform.”

…

“all of these things actually just make it easier for competing companies to re-build your work on their own foundations.”

Interestingly, SQLite, an incredibly successful open source project of more than 25 years, has a comprehensive test suite that was never open sourced. Very prescient.

I wonder how many open source projects are going to start removing their tests? It’s already happened with one prominent project.

How I use Claude Code: Separation of planning and execution

BORISTANE.COM

One of the things I find most interesting about AI tools is that there isn’t a clear and obvious way that you should use them. It is up to us, the users, to discover the patterns that work. At the moment there is surprisingly little consensus on what these patterns or techniques look like.

In this blog post Boris describes a technique he has been honing over the past few months, which can be summarised as follows:

“never let Claude write code until you’ve reviewed and approved a written plan.”

The first step is to ask Claude to research a task, using keywords such as “deeply” and “in great details”. He directs Claude to study the code and consider the tasks or requirements, building a document that is stored as a markdown file.

The next step is to prompt Claude to build an implementation plan, with detailed code snippets and trade-offs. Importantly, it points to existing patterns within the code as a source of reference. This is again saved in markdown.

So far, this feels like a specification-driven development process (SDD), albeit one which is less formal than SpecKit for example.

The final part, which Boris feels adds the most value, is the iterative feedback he provides on the implementation plan. He adds comments inline, then asks Claude to consider and revise the plan, often looping up to 6 times. Once complete, Claude generates a todo list then implements.

Is this a good technique? I can certainly see value in it - and I can understand why it creates a better result than just one-shot prompting.

Would I use it? I don’t think so.

My feeling is that this type of technique tells us more about us than it does about AI. Boris clearly spends time doing a lot of up-front thinking and analysis, and the technique he has developed further supports this through AI. Whereas I prefer to take the shortest path to working software, and shape it, iteratively, from that point.

Neither approach is right or wrong. We’re just different, and that’s ok!

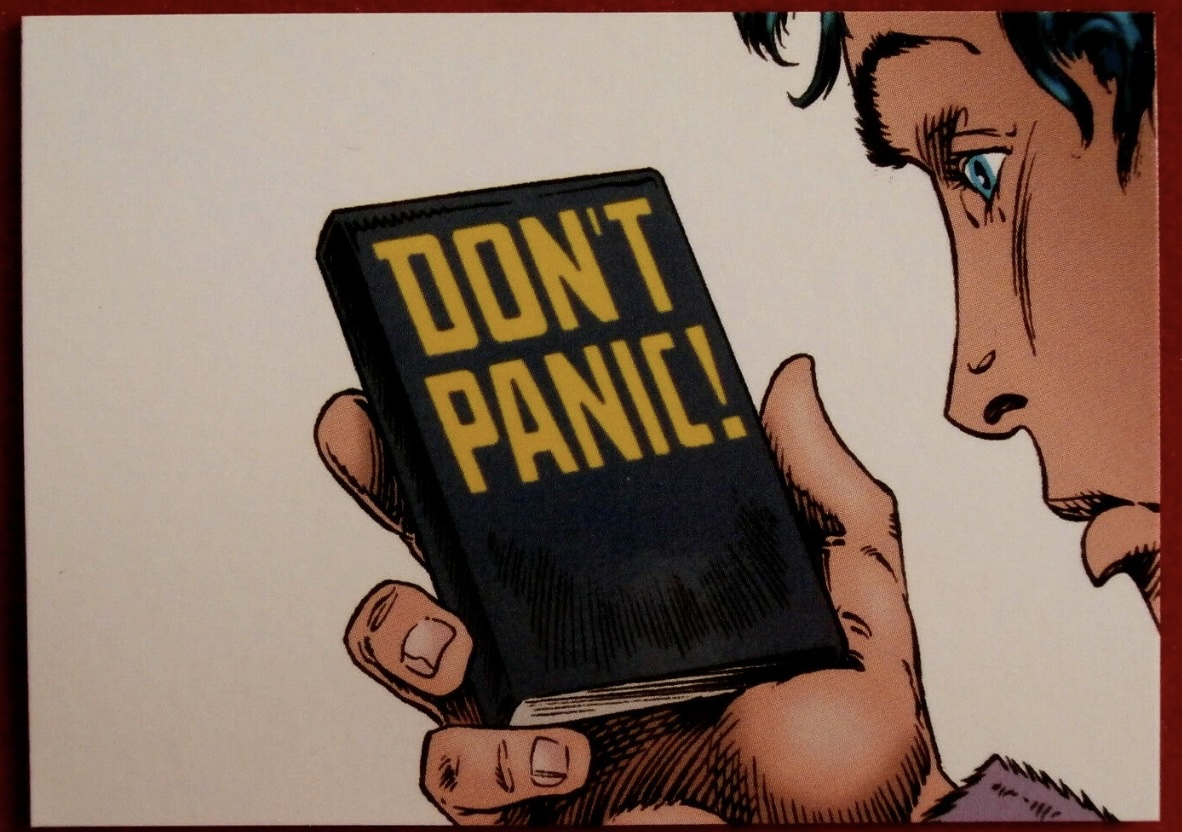

AI? Don’t Panic!

TTULKA.COM

Over the last few months there have been a lot of posts from (typically senior) software engineers expressing feelings of loss, mourning and unease about the future. A growing fear that what they enjoy most about the job is slipping away from them. This post from Tomas tries to inject a bit more optimism.

Firstly, a reminder that AI lacks genuine intelligence. It is great at writing code, but lacks the intelligence needed in the broader field of software engineering, and is showing no signs that it will gain this intelligence. Yet despite this, we are going to experience some significant changes in our industry: programming may become more abstract and less about writing every line of code manually.

Ultimately the future of software and AI is promising if developers adapt, learn, and engage with the tools thoughtfully rather than panic.

I couldn’t agree more.